Independent. Human-Curated. Established 2007.

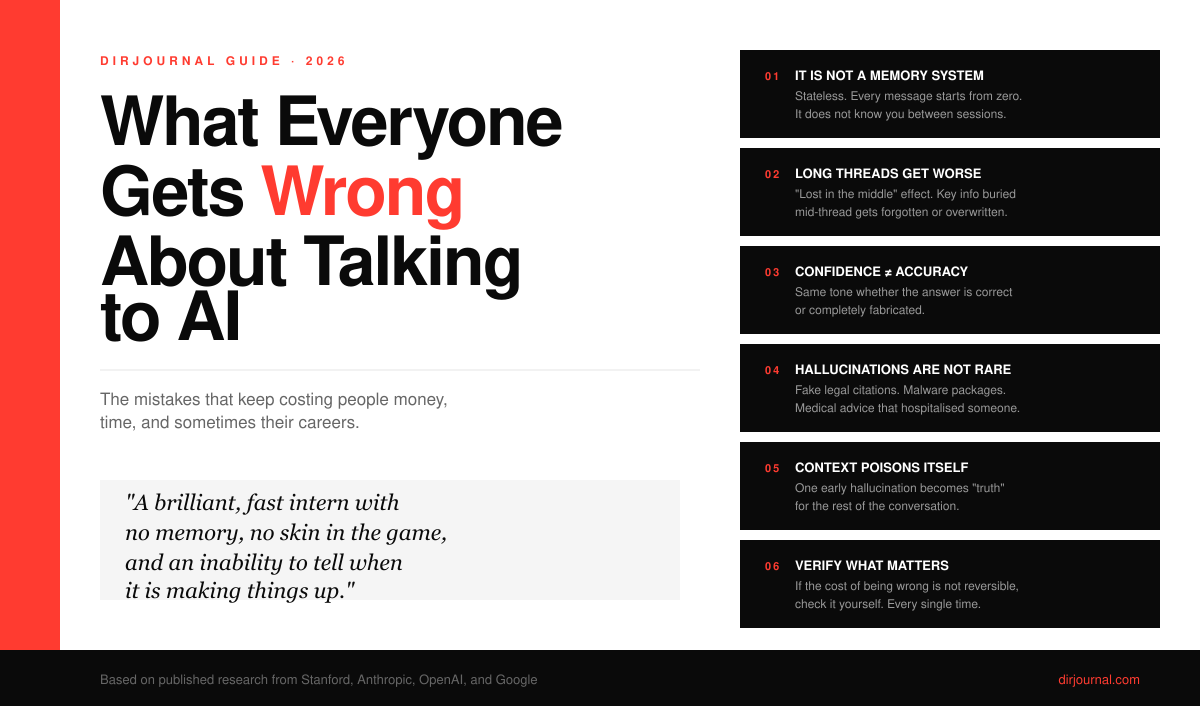

What Everyone Gets Wrong About Talking to AI (And the Mistakes That Keep Costing People Money, Time, and Sometimes Their Careers)

Key Topics in This Guide

- 1. The Single Biggest Misconception: AI is Not a Memory System

- 2. Why Long Threads Get Worse, Not Better (the "Lost in the Middle" Problem)

- 3. Context Retention Compared: Claude Vs. ChatGPT Vs. Gemini

- 4. The Hallucination Problem is Worse Than People Think

A few months back I was deep into a website rebuild, working through a single Claude thread that had been running for the better part of three weeks. I had convinced myself this was the smart way to do it. The longer the chat, I figured, the more "context" the model would have on the project. By week three it would basically be a teammate. It wasn't. Around the time the thread crossed a certain length I cannot pinpoint, the assistant started forgetting decisions we had locked in days earlier. It rewrote files using outdated column names. It cheerfully suggested I "add" a feature that already existed in the codebase, then a few prompts later wiped half the implementation while "cleaning up." When I pushed back, it apologized and confidently broke something else.

That mega-thread cost me close to a day of debugging. It also taught me something important about every AI tool people are using right now: the public mental model of how these things work is not just wrong, it is wrong in ways that have produced sanctioned attorneys, malware-infected codebases, and at least one man who replaced his table salt with bromide salts, a compound now found mainly in pesticides and pool cleaners, after consulting ChatGPT and ended up in a hospital bed.

This post is the long version of what I wish someone had handed me two years ago. It pulls together what Anthropic, OpenAI, and Google have published, what academic researchers have actually measured, and what I have learned the hard way running multiple production sites built with AI assistance. If you only read one piece on this topic, I want it to be this one.

The Single Biggest Misconception: AI is Not a Memory System

The thing most people imagine when they chat with Claude or ChatGPT or Gemini is something like a smart colleague who is gradually getting to know them. That mental model is the source of most of the trouble.

What you are actually talking to is a stateless prediction engine. Every time you hit send, the model receives the entire visible conversation, runs it through a probability machine, and outputs the most plausible next chunk of text. It has no inner life between messages. It does not "think about your project" when you walk away. It does not get to know you, unless a specific memory feature is turned on, in which case it gets to know a tiny summary of you, not the real you.

Three implications fall out of this, and almost every misunderstanding people have can be traced to ignoring at least one:

- If something isn't in the current prompt or active context, it does not exist for the model.

- The model does not verify, fact-check, or "know" anything. It produces statistically likely text.

- Confidence in the output has no relationship to accuracy. The tone is the same whether the answer is correct or completely fabricated.

That last point is the dangerous one. Humans are wired to trust confident speakers. AI is, by default, a confident speaker.
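To make the statelessness point concrete, here is a toy sketch of what a chat client does on every send. Everything in it is hypothetical: `fake_model` is a stand-in I made up that only looks at the messages it is handed, and `send` is an illustrative helper, not any vendor's API. The point it shows is that the "memory" lives in the history list the client resends, not in the model.

```python
from typing import Callable

Message = dict[str, str]  # {"role": "user" | "assistant", "content": "..."}


def fake_model(messages: list[Message]) -> str:
    """Hypothetical stand-in for a chat model: it sees only what is passed in."""
    seen = " ".join(m["content"] for m in messages)
    if "column is user_id" in seen:
        return "Using column user_id."
    return "Which column name should I use?"


def send(history: list[Message], user_text: str,
         model: Callable[[list[Message]], str] = fake_model) -> str:
    """Append the user turn, call the model with the FULL history, store the reply."""
    history.append({"role": "user", "content": user_text})
    reply = model(history)
    history.append({"role": "assistant", "content": reply})
    return reply


# Same question, two threads. Only the first thread carries the earlier decision.
thread_a: list[Message] = []
send(thread_a, "The column is user_id.")
print(send(thread_a, "Write the query."))

thread_b: list[Message] = []
print(send(thread_b, "Write the query."))
```

The first thread answers with the agreed column name because that decision is sitting in the resent history. The second thread has no such message, so as far as the model is concerned the decision never happened.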

Why Long Threads Get Worse, Not Better (the "Lost in the Middle" Problem)

Here is the part that surprised me the most when I started reading the research. The whole industry has spent two years bragging about context windows. Claude went to 1M tokens. GPT-5.5 went to 1M tokens. Gemini 3.1 Pro is at 1M tokens. The implication, sold loud and often, was that you could now feed in entire codebases or novels and the model would handle them gracefully.

The reality is more complicated. A landmark paper from researchers at Stanford, UC Berkeley, and Samaya AI introduced what they called the "lost in the middle" effect. When you place key information in the middle of a long context, model accuracy drops sharply, even on models specifically built for long-context tasks. The performance curve is U-shaped: information at the beginning and end of the context gets recalled well, and the material in the middle gets fuzzy. Follow-up research from Snorkel AI confirmed the same pattern in GPT-4 and Claude 3 Opus.
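If you want to see this on your own tool, one rough approach is a position probe: bury a single fact at different depths in filler text and ask the same question each time. The sketch below only builds the probe prompts. It is my own simplification, not the setup used in the paper, and the function name, filler text, and needle sentence are all made up for illustration. Sending each prompt to a model and scoring the answers is left out.

```python
def build_probe(filler: list[str], needle: str, depth: float) -> str:
    """Insert `needle` at a relative depth (0.0 = start, 1.0 = end) of the filler lines."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    index = round(depth * len(filler))
    lines = filler[:index] + [needle] + filler[index:]
    return "\n".join(lines)


# Hypothetical filler and needle, purely for illustration.
filler = [f"Note {i}: nothing important here." for i in range(10)]
needle = "The staging database is named 'walrus'."

for depth in (0.0, 0.5, 1.0):
    prompt = build_probe(filler, needle, depth)
    line_number = prompt.split("\n").index(needle)
    print(f"depth={depth} -> needle is on line {line_number} of {len(filler) + 1}")
```

If the U-shaped curve applies to your model, the probes at depth 0.0 and 1.0 should be answered more reliably than the one at 0.5 once the filler gets long enough.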

Independent benchmarking from Elvex found that models often only retain reliable accuracy across about 60 to 70 percent of their advertised context window before performance starts breaking down. Claude has historically held up better than its competitors here, with less than 5 percent accuracy degradation across its full 200K range, but no model is immune.

There is also a phenomenon I learned to call "context poisoning." If the AI hallucinates a fact early in a thread and you don't catch it, that hallucination becomes part of its working "truth" for the rest of the conversation. Every subsequent answer builds on that false foundation. The error compounds. By the time you notice, half your conversation is built on something the model invented in message four.

The takeaway is counterintuitive but important: a clean five-message thread is almost always smarter than a sprawling fifty-message thread. More context is not more intelligence. It is often less.
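The 60 to 70 percent figure above can be turned into a rough rule of thumb for when to start a new thread. This is a back-of-the-envelope sketch, not a measurement: the four-characters-per-token estimate is a common approximation I am assuming here (real tokenizers vary), and the function names are hypothetical.

```python
import math


def effective_budget(advertised_tokens: int, usable_fraction: float = 0.6) -> int:
    """Tokens you can plausibly rely on, taking the low end of the 60-70% range."""
    return round(advertised_tokens * usable_fraction)


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate. ASSUMPTION: about 4 characters per token."""
    return math.ceil(len(text) / chars_per_token)


def should_start_fresh(messages: list[str], advertised_tokens: int,
                       usable_fraction: float = 0.6) -> bool:
    """True once the thread's estimated size passes the effective budget."""
    used = sum(estimate_tokens(m) for m in messages)
    return used > effective_budget(advertised_tokens, usable_fraction)


print(effective_budget(1_000_000))  # effective budget for a 1M-token window
print(should_start_fresh(["x" * 400] * 10, advertised_tokens=1_000))
```

A 1M-token window at 60 percent leaves about 600,000 tokens of reliable room, and lost-in-the-middle effects can show up well before that. Treat the output as a nudge to summarize and restart, not as a guarantee that anything under the line is safe.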

Context Retention Compared: Claude Vs. ChatGPT Vs. Gemini

Here is a side-by-side that reflects where each tool actually sits in early 2026, drawn from each company's published specs and independent benchmarks.

| Capability | Claude (Opus 4.7) | ChatGPT (GPT-5.5) | Gemini (3.1 Pro) |
|---|---|---|---|
| Maximum context window | 1M tokens | 1M tokens | 1M tokens |
| Effective context (real-world) | Strongest sustained accuracy across full range | Strong on tasks ≤ 128K, degrades faster on long inputs | Largest by raw size, but uneven mid-context recall |
| Persistent memory between sessions | Yes (opt-in, summary based) | Yes (Memory feature, tracks preferences) | Yes (in Gemini Advanced, scoped) |
| Built-in web search | No (separate tool when enabled) | Yes (default for current questions) | Yes (deeply integrated with Google Search) |
| Coding context retention | Best in class for agentic coding workflows | Strong general coding, weaker on multi-file consistency | Fast and broad, occasionally inconsistent on tricky logic |
| Tendency to admit "I don't know" | Highest | Moderate | Lowest |
| Hallucination rate (commercial averages) | Low | Low | Moderate |

Two notes on this. First, all three companies update their models every few months and the gaps narrow constantly. By the time you read this, GPT-5.6 or Gemini 3.2 may have shifted things again. Second, raw context size is the least interesting number on this table. What matters is how reliably the model uses the context you give it. On that front, none of these tools deserve blind trust.

The Hallucination Problem is Worse Than People Think

Most people understand "AI hallucinates" as an abstract risk. Let me give you three concrete examples that should change how you think about it.

Example 1: Slopsquatting. Researchers at the University of Texas at San Antonio, the University of Oklahoma, and Virginia Tech tested 16 large language models across 576,000 code samples in a 2025 USENIX Security study. Open-source models invented non-existent package names 21.7 percent of the time. Even commercial models like GPT-4 hallucinated packages in 5.2 percent of cases. The researchers identified more than 205,000 unique fictional package names. Attackers have been registering these names on npm and PyPI and uploading malware under them. One hallucinated package called huggingface-cli was downloaded over 30,000 times in three months before anyone noticed it was fake. A security researcher who registered the hallucinated react-codeshift watched it spread into 237 GitHub repositories before he locked it down.

If you build websites using AI tools and copy-paste install commands without checking, you are part of this attack surface.
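One cheap habit that helps: before running an AI-suggested install command, compare the package names against a list you have vetted yourself. Checking that a name merely exists on the registry is not enough, because the whole attack is that someone registered the hallucinated name. The sketch below is a minimal illustration under stated assumptions: the function names and the `vetted` set are hypothetical, and the parser only handles simple `pip install name[==version]` commands, not flags that take arguments (such as `-r requirements.txt`), extras, or URLs.

```python
import shlex

VERSION_SEPARATORS = ("==", ">=", "<=", "~=", "!=", ">", "<")


def packages_in_pip_command(command: str) -> list[str]:
    """Extract package names from a simple `pip install ...` command."""
    tokens = shlex.split(command)
    if "install" not in tokens:
        return []
    names = []
    for token in tokens[tokens.index("install") + 1:]:
        if token.startswith("-"):
            continue  # skip standalone flags such as --upgrade
        for sep in VERSION_SEPARATORS:
            token = token.split(sep)[0]  # drop version specifiers
        names.append(token.lower())
    return names


def unvetted(command: str, vetted: set[str]) -> list[str]:
    """Return the packages in the command that are not on your own vetted list."""
    return [name for name in packages_in_pip_command(command) if name not in vetted]


# Hypothetical vetted list; in practice this might come from your lockfile.
vetted = {"requests", "flask"}
print(unvetted("pip install requests huggingface-cli flask==3.0.0", vetted))
# → ['huggingface-cli']
```

Anything the check flags is not necessarily malicious. It is a package you have not looked at yet, which is exactly the moment to go read its registry page, maintainer history, and source before installing.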

Example 2: Fabricated case law. The legal field has had a brutal two years on this front. The original Mata v. Avianca case in 2023 saw attorney Steven Schwartz fined $5,000 for filing a brief with cases that did not exist. It was supposed to be a one-time embarrassment. It wasn't. By 2025, Morgan & Morgan, the largest plaintiffs' firm in the U.S. by headcount, was sanctioned $5,000 after one of its lawyers cited eight non-existent cases generated by AI. A California state court fined two law firms $31,000 in another AI-generated citation case. A researcher at HEC Paris, Damien Charlotin, has built a public database tracking these incidents. As of late 2025 it logged 486 cases worldwide.

A Stanford RegLab analysis found that some AI tools generate hallucinations in roughly one out of every three legal queries. One in three.

Example 3: Medical misinformation that lands people in hospitals. A case study published in the Annals of Internal Medicine documented an older man who asked ChatGPT how to cut sodium from his diet. The model recommended replacing his table salt with bromide salts. Bromide salts were used as a sedative around 1900. They are now found primarily in pesticides and pool cleaners. The man was hospitalized with bromide poisoning. He had assumed, reasonably, that an AI giving health advice would not direct him to consume something used to clean swimming pools.

These are not edge cases. They are the predictable output of a system that produces plausible-sounding text without ever checking whether it is real.

Where the Public Goes Wrong With AI in Personal Life

I split this into personal and professional because the misuse patterns look different.

On the personal side, the most common mistakes I see:

- Treating AI medical answers as a final verdict instead of a starting point for talking to an actual doctor

- Using it for legal interpretation and acting on the answer without ever speaking to a lawyer

- Asking for stock picks, crypto plays, or "should I buy this house" advice and weighing it as expert opinion

- Pasting personal information, financial details, or family medical history into chat tools without checking the platform's data policy

- Using AI as a therapist during a real mental health crisis without recognizing how badly that can go

- Believing the first answer on a contested topic instead of probing or cross-checking

- Assuming the AI is "the same" between conversations or even between days, when models update silently

- Sharing screenshots of chats as if the AI's confident phrasing equals authority

The fundamental error is treating the AI as a credentialed source. It has no credentials. It has no liability. It has no professional review board. When it gets something wrong, no one is on the hook except you.

Recommended for You

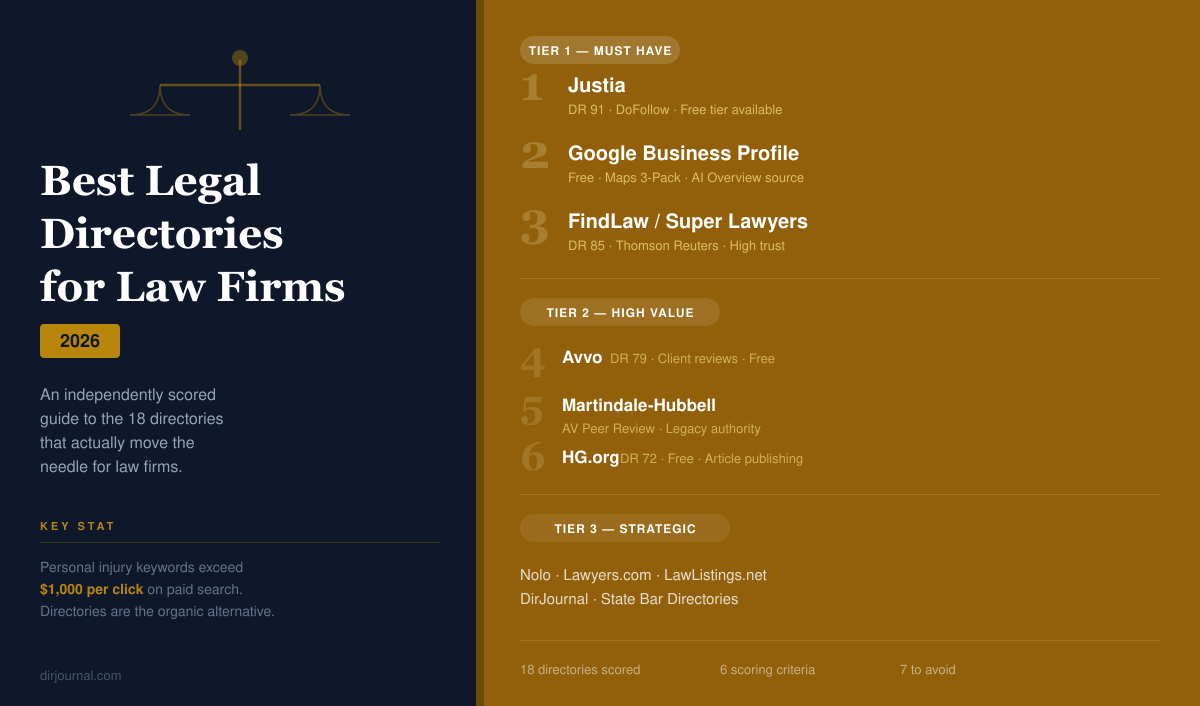

Best Legal Directories for Law Firms in 2026: Which Ones Actually Move the Needle

An independently scored guide to the 18 best legal directories — with domain authority, traffic figu

The 2026 Architecture Audit: How to Choose Between Agentic AI, LLM Fine-Tuning, and RAG

The question for most CEOs has shifted from "Should we use AI?" to "Which specific architecture will

Top 10 Brain Foods – Nourish Your Mind in 2026

Related Resources

Looking for verified service providers? Browse our directory categories below — all human-audited and trusted by decision-makers since 2007.